About Multi-Sonik

- Posts: 55
- Reputation: 6 (Neutral)

- Birthday 01/01/1972


Issue with Steinberg's ASIO driver messing up CWBBL devices

Multi-Sonik replied to Multi-Sonik's topic in Cakewalk by BandLab

Thanks everybody. All of your posts are very instructive.

Issue with Steinberg's ASIO driver messing up CWBBL devices

Multi-Sonik replied to Multi-Sonik's topic in Cakewalk by BandLab

Thanks! I'll look into this. I wonder if I am the only one experiencing this... Does anyone else here also have Spectra Layers installed? Because it seems that only CWBBL has some kind of issue with it... (I also have Reaper and Bitwig installed, and I can select any of my available ASIO devices without any issues: no lag, all clean!) Anyway, happy holidays everybody.

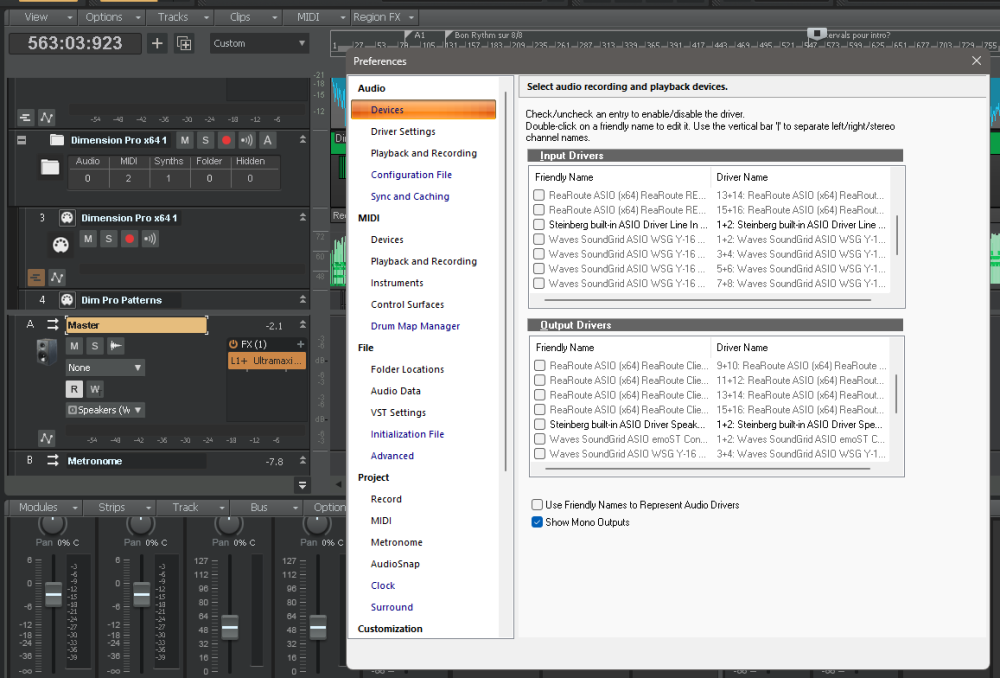

Hello guys. I recently bought Steinberg's SPECTRA LAYERS and installed it on my DAW. I noticed that the program also installed its own ASIO DEVICE for my system to use. I also updated CWBBL to its latest build. (So, that is TWO system changes...)

Now, when trying to open CWBBL, it always tries to use that Steinberg ASIO device (even though I never selected it...). The Preferences interface lags a lot (and in fact, all of CWBBL lags now...).

Still in PREFERENCES, even when I finally manage to UNcheck Steinberg's device, CWBBL does not allow me to re-select the ASIO device I really want to use (either my Waves SOUNDGRID ASIO driver or even the ReaRoute ASIO device...). The system lag is still very annoying...

What should I do? Is there some way to make CWBBL clear / rescan the available ASIO devices without first selecting Steinberg's one?

-

Hello everyone. With CWBBL version 2024.02 (build 098), my system has been behaving weirdly recently. Is anybody else experiencing similar issues?

- Sometimes the control surface does not load correctly in my projects at all.
- Sometimes projects open fine, but lose the connection to the control surface later on.
- MIDI inputs on a selected track (MIDI or instrument tracks), used to record MIDI data for virtual instruments, are not reacting at all (MIDI meters on the track are dead), BUT the MIDI ACTIVITY icon in Windows (the little keyboard icon with 2 red lights) reacts to my playing, as expected.

I'm using a Behringer MOTOR49, and said controller is fully working as expected with the other DAWs and standalone synths installed on my system. Thanks!

-

LA2A / LP EQ / LP MB re-activation thread?

Multi-Sonik replied to Multi-Sonik's topic in Instruments & Effects

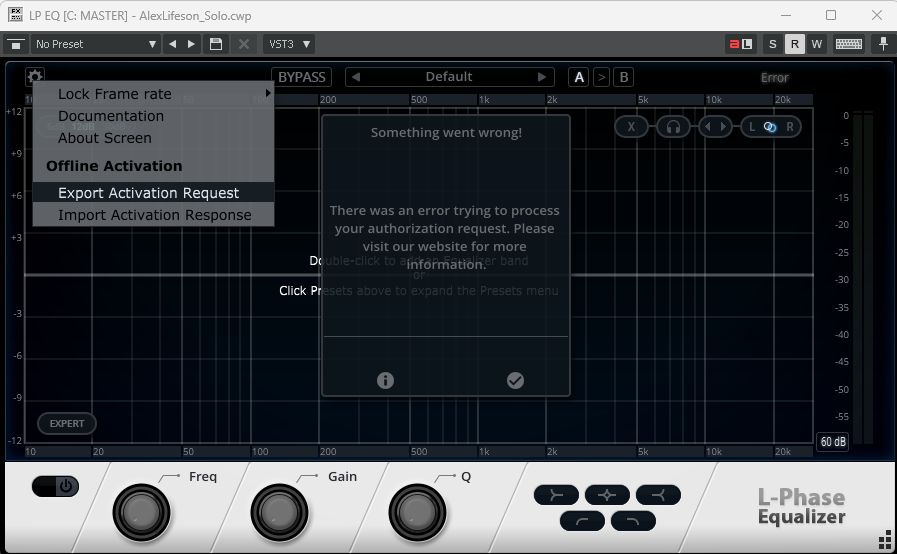

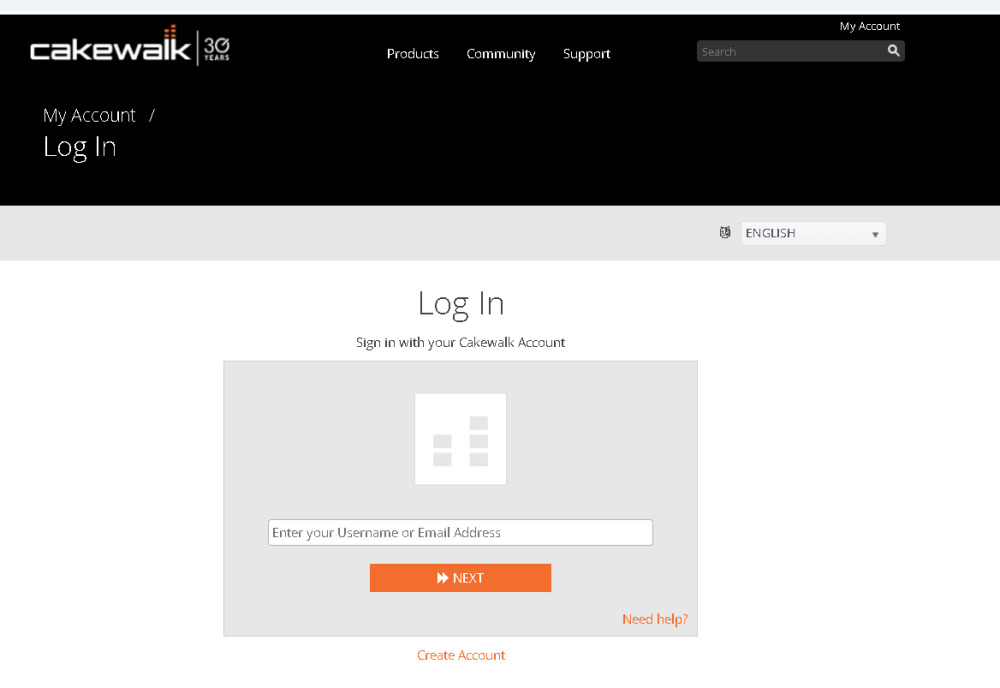

Thanks! I had already recovered my old serial numbers a couple of months ago. But I did finally find how to proceed... I remembered something about offline activation requests / responses, but could not find where to initiate it anymore.

In the LP plugins, it's from the cog menu (top left-hand corner). From there you:

- Generate an activation request file.
- Upload it on the legacy site at https://legacy.cakewalk.com/My-Account/Offline-Activation
- Download the activation response files.
- Re-import them in the plugin through the same cog menu.

For the LA2A, the menu is hidden in the top right-hand corner. Hope this helps.

Hello guys. Sorry about this stupid question, but I am searching this forum for the thread explaining how to reactivate these Cakewalk plugins, and I have not found it yet. I've stumbled on the general CWBBL activation thread, but it only seems to address the main DAW activation itself. TIA and happy new year to you all!

-

Hello everyone. I am attempting to locally archive a full, complete installer of CWBBL for later use. Is this even possible / legal? Asking because I am testing a fresh install in a Windows Sandbox and I can see that the installer still downloads files from the net during installation. So, I am afraid that those files might eventually not be available in the future.

The reason I am doing this is that I am not planning to upgrade to the new SONAR this year (as I am pretty sure the price range will be around 400-500$), but I still want to open my past projects. I am also not planning to buy "new" computers anytime soon (just used ones... powerful enough for me and my little projects), so I'm going to stick with Win 10-11 for a looooonnng time without any negative impact on my projects / use cases.

So I basically want to keep CWBBL available to me until... the end? (Over 50y over here... It is what it is...) Thoughts? Recommendations? Thanks!

-

Hello everyone. Does anyone know how I can contact the support team for the legacy.cakewalk.com site? The links presented on the site to email support are not working for me, and I cannot use the direct "contact support" web interface because it requires that I log into my account beforehand. So I'm kinda stuck.

I have been a Cakewalk user since the 1990s, all the way through SONAR PLATINUM and CWBBL. I tried to use these LP plugins, originally included in SONAR PLATINUM, in a project today, and it seems they are not activated anymore. I'm trying to log in to legacy.cakewalk.com to re-authorize, but none of my passwords are working and I am not receiving any of the password reset emails I request. Thanks in advance.

-

Hello everyone. I'm trying to set up a MATRIX project where I'll be using 2 short stereo audio files / loops triggered by COLUMN A... this works fine. BUT I'm trying to put 3 stereo files (about 17 minutes each) in COLUMN B. These files are 3 distinct audio environments designed to play at the same time, over 3 independent audio outputs.

So far, it is not working properly. These three files are being "warped" / groove-clipped somewhat, even though I think I'm doing everything that should be done to prevent this. In detail:

- Clips are not marked as loop or tempo stretch / follow tempo when I look at the CLIP property pane before importing to the Matrix.
- In the MATRIX view, global loop is OFF.
- When importing the clips, they get the "one shot" symbol on the cell (not the loop one).
- Even then, I can hear audio artifacts usually related to "groove looping" / time matching on playback.
- When I double-click on the matrix cell that contains one of the long audio clips, to have a look in the LOOP CONSTRUCTION VIEW, the file is unexpectedly marked as a loop and I can see slice markers...
- When disabling the LOOP option in the loop construction view (attempting to confirm this file is to be treated as a one-shot), the file (or the matrix cell that contains it) does not launch playback at all when I click on it from the MATRIX.

Soooo.... has anyone successfully used long audio files as ONE SHOTS in the MATRIX view or not? If so, what am I forgetting to do in my preps here? Thanks.

-

Feature Request - Matrix View Upgrade: Live Looping Capabilities

Multi-Sonik replied to Phoenix's topic in Feedback Loop

+1! In fact, just being able to edit MIDI data more easily within matrix cells would already be cool. Last time I checked (and I confess it has been a long time...), I had to replace the cell's content to update it to my taste. I hope I have not just overlooked something in the Matrix's workflow that might already be there, though...

- 17 replies


-

Soundgrid ASIO in available audio devices ?

Multi-Sonik replied to Multi-Sonik's topic in Cakewalk by BandLab

Thanks for your help! I did check out the items you listed, and I also went back to basics a couple of days ago: I completely uninstalled all of my WAVES components and reinstalled them all again. Something must have gone wrong when I first tried to "update"... Once again the "from scratch" approach saved the day. All good now. Regards.

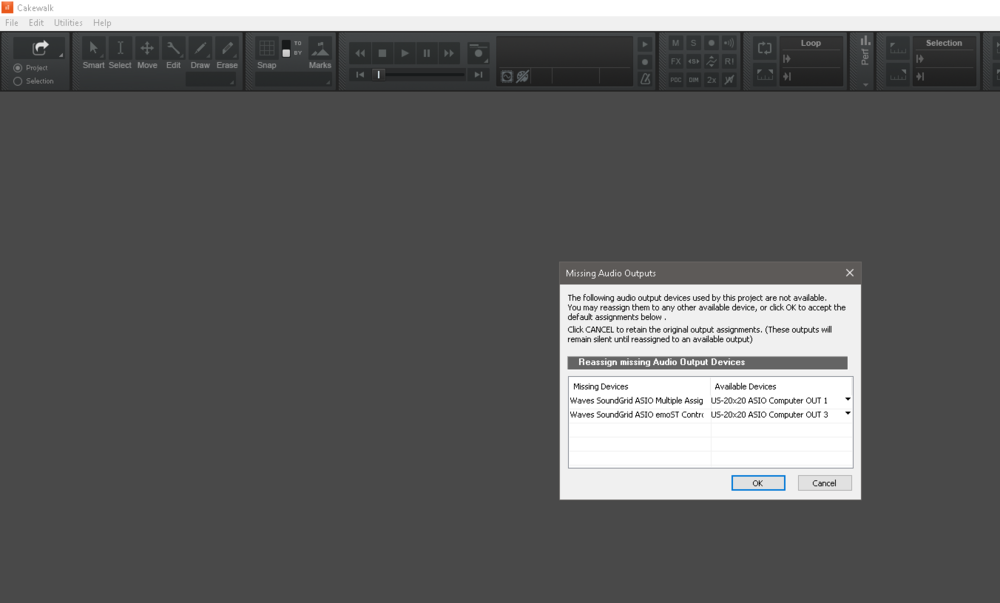

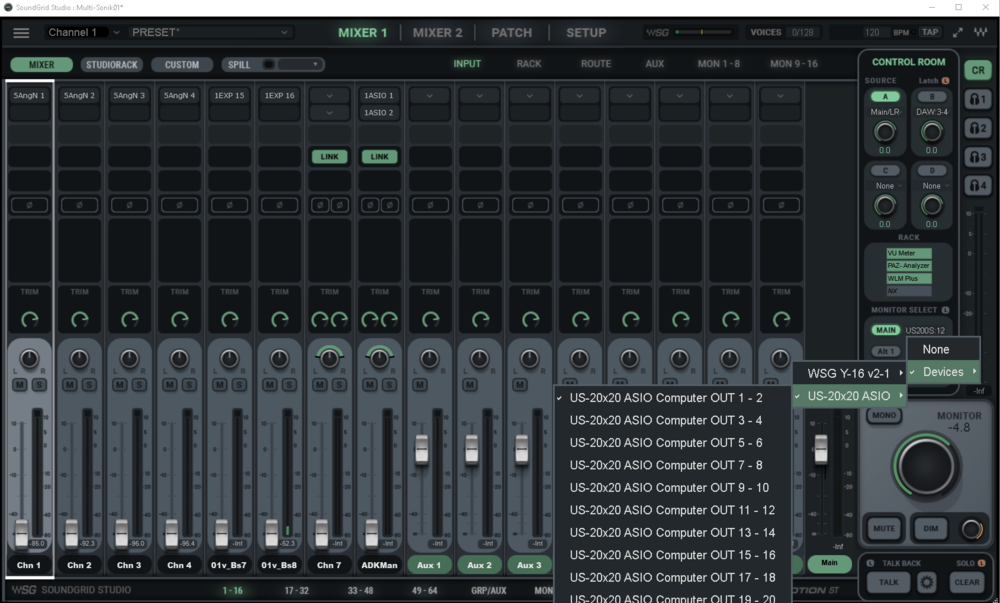

Hello group. I'm having issues running CWBBL with my Waves Soundgrid Studio. I recently did updates (software, device firmware) in order to do some cleanup and mainly use Waves V13. But now, CWBBL (and my other DAWs) are not detecting the SoundGrid ASIO DRIVER. It is not showing up in the available audio devices list in Preferences. Beforehand I could see both ASIO DRIVERS, from Waves Soundgrid and from my Tascam US-2020 (which is integrated with my Soundgrid system). Not the case anymore, as you can see in the captures.

When selecting the Tascam audio interface, it is not working. (Playback starts but no sound can be heard, no VU meters are active and I cannot stop the playback... I have to kill CWBBL via Windows' Task Manager.) This behavior was always the case on my system, but I just thought that since the Waves SG ASIO driver disappeared, I should switch to the Tascam's ASIO driver. It did not work, as I said.

Fun fact: when searching the Waves manual for something I might have overlooked, I came across a section saying that in order to properly use STUDIO RACK for low-latency monitoring when recording with a DAW, the DAW should use the audio interface's driver... Now I am floored, as I think it never really worked like that over here... I think! Lol.

So, how are you guys configured regarding your selected ASIO DRIVERS in CWBBL when used within a Soundgrid system? My Soundgrid setup seems to detect all my components correctly, but somehow the Soundgrid ASIO driver that, I think, used to be available to the DAWs is just not there anymore...

Posting some other captures for context... not sure if they are really relevant though... Lol. I'm posting them, I guess, because I'm wondering if my setup / patch is somehow generating a clock-related issue that could make the SG ASIO driver "disappear"... but I think it is very unlikely... I'm monitoring through the US-2020 computer out 1-2 (connected to my speakers).

-

Arrangements: suitable for a high number of sections?

Multi-Sonik replied to Multi-Sonik's topic in Cakewalk by BandLab

In my first attempts, it did not exactly behave like that... But I might just have overlooked something. I'll try again!

- 6 replies


-

Arrangements: suitable for a high number of sections?

Multi-Sonik replied to Multi-Sonik's topic in Cakewalk by BandLab

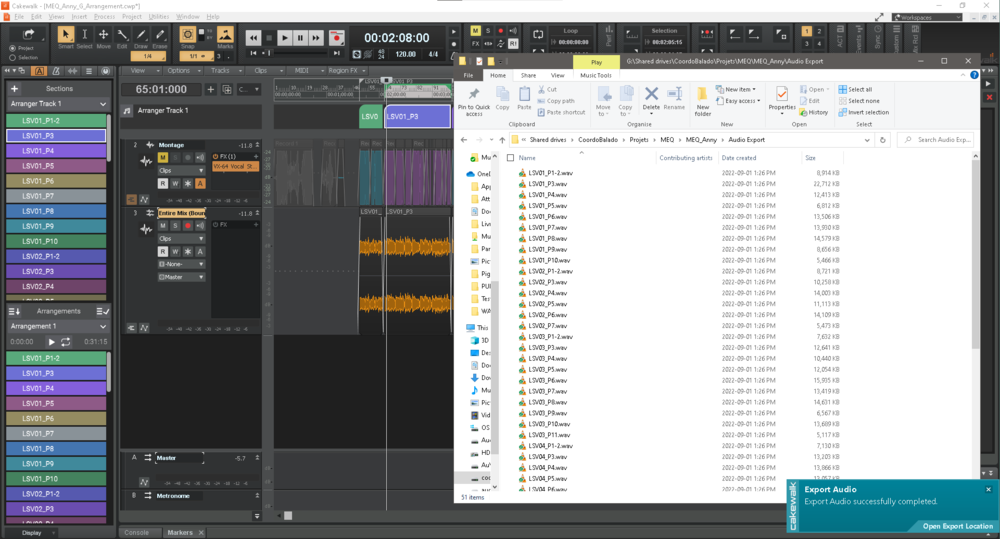

... and just for the sake of the discussion, here is what I had to do manually in order to get today's job done. I did encounter other problems in my attempts to use the arranger features...

- Did a BOUNCE TO TRACK of my completed editing job.
- Selected the whole timeline and used SPLIT CLIPS AT BOUNDARIES from the ARRANGER TRACK (to have my new bounced track split accordingly).
- Manually copied/pasted the arranger track's SECTION NAMES to update my CLIP names (on the bounced track).
- Did an EXPORT AUDIO using only my selected bounced track, with CLIPS as the export source...

... But wait! I have not yet listened to any of those exported clips... I might be in for another surprise... Doing some listenin' right now... Regards.

- 6 replies
